A Roadmap for KV Cache Offloading at Scale

GPU HBM can't scale vertically fast enough to match the explosive growth of the KV cache, driven by longer context windows, multi-turn sessions, and agentic workloads that treat inference state as persistent rather than ephemeral. The solution, now adopted broadly across inference engines like sglang and vLLM, is to relieve pressure by offloading the KV cache.

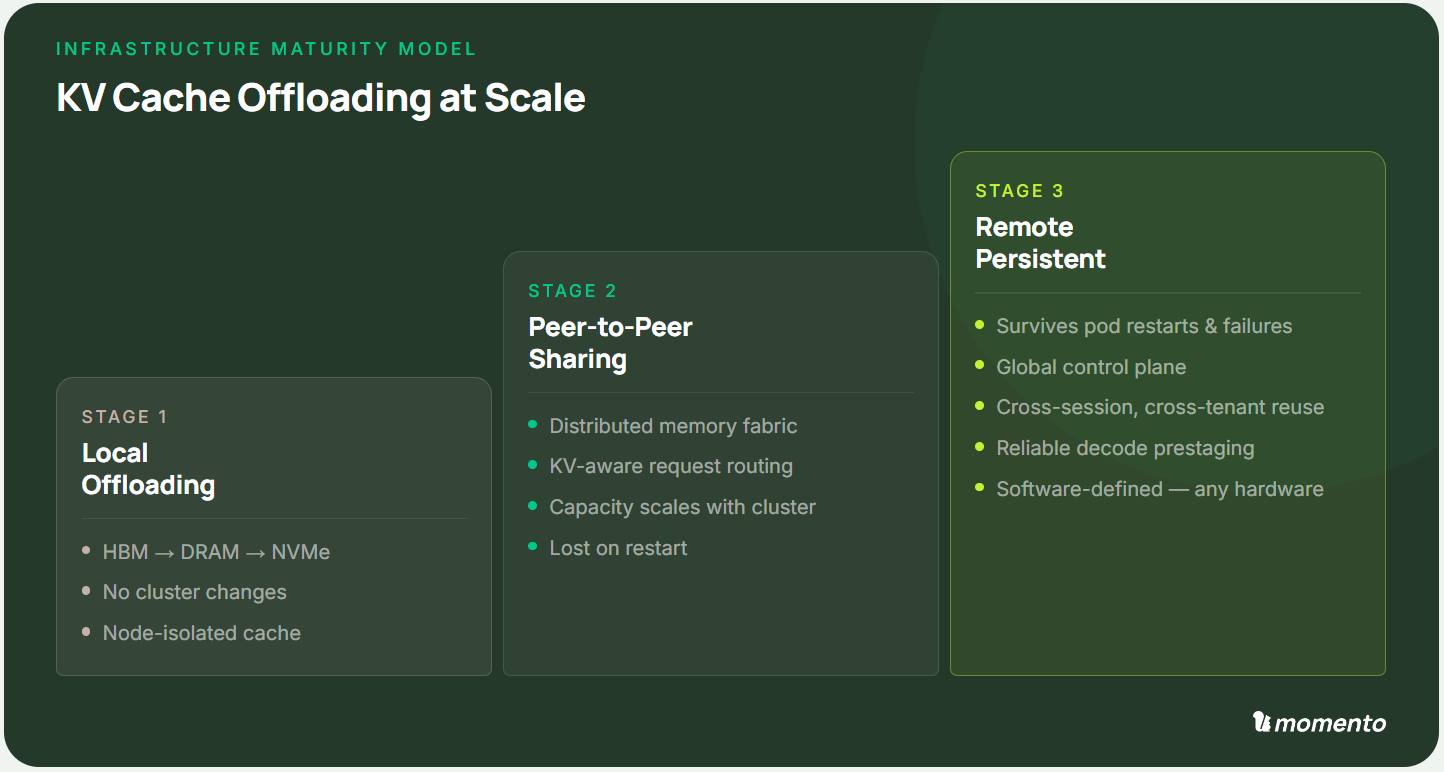

Moving KV blocks further from the GPU introduces complex considerations for latency, throughput, and cost. Yet, the engineering challenge is not whether to offload, but how far and with what degree of coordination. Below, we present a three-stage maturity framework for KV caching to guide the incremental evolution of your inference stack.

Stage 1: Local Offloading

The first maturity level extends KV capacity beyond HBM by spilling evicted blocks onto the same physical host. When HBM fills under sustained concurrency, the runtime evicts blocks to host DRAM. When DRAM fills, blocks spill further to locally attached NVMe storage. The process reverses during prefetch, where the runtime stages blocks back toward the GPU ahead of the decode phase.

This technique works with node-local access paths over standard file system interfaces. No changes are required to cluster networking, routing infrastructure, or inference runtime configuration. You can deploy this on existing hardware with minimal operational risk.

And the gains are real. Effective KV capacity per node increases by orders of magnitude. Warm contexts that would otherwise be evicted and recomputed remain accessible. For single-node deployments or small fleets with short context windows, this simple built-in feature is often sufficient.

The ceiling, however, is clear. Even terabytes of local cache fill up over time, and each node's cache is invisible to the rest of the cluster. When the same document is ingested by ten users routed to ten different machines, each node computes and caches the same KV blocks independently. As your fleet grows, per-node cache hit rates fall, and the compute wasted on redundant prefill grows accordingly.

Stage 2: Peer-to-Peer Sharing

The second maturity level treats the cluster's aggregate memory as a single shared resource. Rather than each node maintaining its own isolated cache, a distributed memory fabric exposes KV blocks across all nodes simultaneously. Any instance can read blocks that were computed elsewhere, regardless of which node holds them.

The mechanics of peer-to-peer caching requires two things to work well. First, you need an optimized data transfer layer. High-speed interconnects like PCIe or RDMA provide a boost, of course, although an optimized networking stack can achieve excellent performance over TCP on a typical datacenter network. Look for implementation details like zero-copy transmission, high-concurrency threading, real-time compression, pipelining, and tuned buffers.

Second, the request router must understand cache state. A router that ignores KV locality will frequently send requests to nodes that hold none of the relevant blocks, paying full prefill cost when a smarter routing decision would have found a cache hit. A KV-aware gateway estimates the prefill cost of serving each request on each candidate node and routes accordingly.

When both components work together, the results shift substantially. Cache capacity scales linearly with cluster size. Cross-node cache hits approach on-GPU latency for long-context workloads as the transfer layer quickly relocates cache blocks. Throughput gains from scaling out compound rather than plateau.

The remaining limitation is persistence. This shared memory fabric lives in RAM and local disks. When any node restarts, it tears a hole in the cluster's collective memory. And when the cluster restarts, all cached context is lost. For workloads that span sessions, this is a fundamental gap. An agentic system that relies on cached context from a prior session will find nothing in the pool and must recompute from scratch.

Stage 3: Remote Persistent Storage

The third and final maturity level makes KV cache durable and active. Rather than treating cached blocks as volatile objects that exist only in memory, this tier persists them to shared storage that survives pod restarts, node upgrades, and cluster-wide failures. Context accumulated during one session becomes available for reuse in the next.

The architecture shift is significant. KV data is managed as a distinct storage class, separate from both GPU memory and local disks. Platform-level orchestration takes responsibility for deciding where each block lives, when it is evicted, and how it is staged back toward the GPU. These decisions move out of the inference runtime and into a dedicated global control plane.

One critical implementation question is whether you need specialized hardware to successfully leverage a global, orchestrated cache. Proprietary accelerators and specialized networking hardware can deliver impressive raw performance, but they are expensive, supply-constrained, and lock operators into specific vendor ecosystems.

Fortunately, our testing has proven that a fully software-defined architecture optimized for standard cloud servers and network infrastructure is sufficient. This unlocks a flexible, low-risk approach via rapid deployment across any hardware.

With durable remote storage and active orchestration in place, the benefits accumulate across the entire fleet. Multi-session multi-node reuse becomes the norm, while reliable prestaging eliminates decode stalls.

Choosing Where to Start

The stages of this maturity model are not mutually exclusive phases. They represent different points on a tradeoff curve between complexity, cost, and capability.

A small fleet running short-context workloads on a handful of nodes will find local caching sufficient. A mid-size deployment with high prefix reuse across many concurrent users will benefit from the shared P2P memory pool. A large fleet running agentic workloads across sessions, tenants, and deployments will require the sophisticated durability of global cache orchestration.

KV cache management has evolved from an implementation detail inside inference engines to a discipline that spans networking, storage, and orchestration. This maturity model attempts to outline a clear path forwards, as each stage unlocks new capabilities through small, incremental investments. Ultimately, inference is evolving and so too must your infrastructure.

At Momento, we're excited to enable this next frontier of AI through efficient, hyperscale caching. Follow along at our blog, or reach out if you'd like to collaborate on testing new ideas.